We hear a lot about new tech like AI and machine learning, and about implementing DevOps processes across multiple clouds. But we don’t hear much about the hardware innovations that are making it all happen. I was fortunate enough to hear from Intel and their customers during the latest Tech Field Day about their Optane Memory and CXL (Compute Express Link) strategy. I’m here to tell you, storage will never die!

Basics

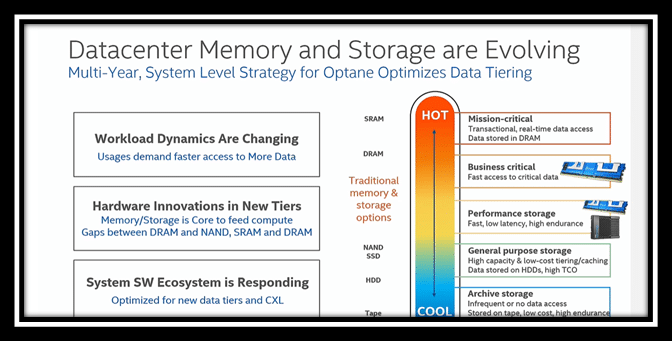

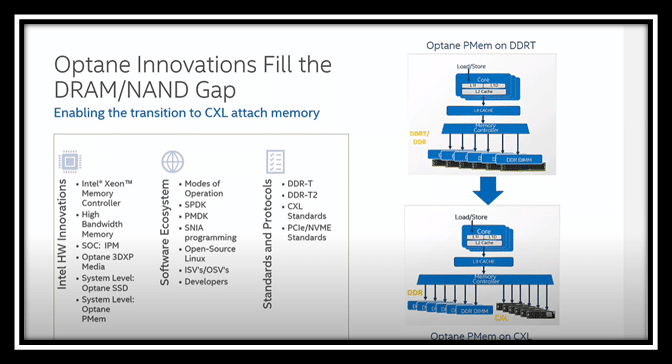

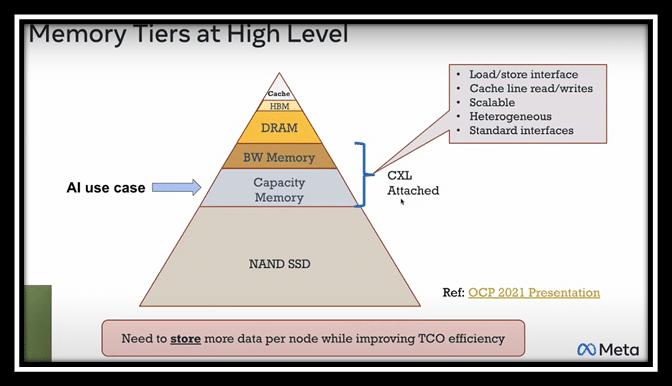

Intel views the market through the lens of data tiering, with a focus on workloads. They have been working to bring a cost-effective, scalable tiering architecture to market. The Optane product line is now in its 4th generation, and Intel sees this version as an excellent intermediate tier between DRAM and NAND.

To keep everyone on the same page: DRAM holds the data that is actively being worked on and is volatile (it loses its contents without power), while NAND stores data persistently and is non-volatile (it keeps its contents without power). You can find more definitions and market descriptions in this presentation on semiconductors.org.

But this entire market is changing as workloads change. Intel has focused on optimizing the tier between DRAM and NAND because workloads demand faster and faster access to data. One reason is that core counts keep increasing, and those cores need enough memory to keep them fed. All the slides and content in this section come from Intel’s first #TFD25 session.
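To make the tiering idea concrete, here is a minimal sketch of a placement policy: the most frequently accessed data goes to the fastest tier that still has room, and everything else spills down to slower, larger tiers. This is a simple greedy heuristic for illustration, not Intel’s actual tiering logic; the `Tier` class, `place_by_heat` function, and all capacities are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    name: str
    capacity_gb: float


def place_by_heat(items, tiers):
    """Greedily place items into tiers, hottest item first, fastest tier first.

    items: list of (name, size_gb, accesses_per_sec) tuples (hypothetical workload data)
    tiers: list of Tier, ordered from fastest (e.g. DRAM) to slowest (e.g. NAND)
    Returns a dict mapping item name -> tier name.
    """
    remaining = {t.name: t.capacity_gb for t in tiers}
    placement = {}
    # Visit items from most to least frequently accessed.
    for name, size_gb, _ in sorted(items, key=lambda it: it[2], reverse=True):
        for tier in tiers:
            if remaining[tier.name] >= size_gb:
                remaining[tier.name] -= size_gb
                placement[name] = tier.name
                break
        else:
            # No tier had enough free capacity left for this item.
            raise ValueError(f"no tier has room for {name}")
    return placement
```

For example, with hypothetical tiers `[Tier("DRAM", 64), Tier("PMem", 512), Tier("NAND", 4096)]`, a small, very hot index lands in DRAM, a medium warm cache lands in the PMem tier, and a large, rarely touched archive lands in NAND. The in-between tier is what keeps the warm data off NAND without paying for that much DRAM.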

Intel has built a multi-year strategy so they can support any type of workload, probably including some that haven’t even been thought of yet.

What is CXL

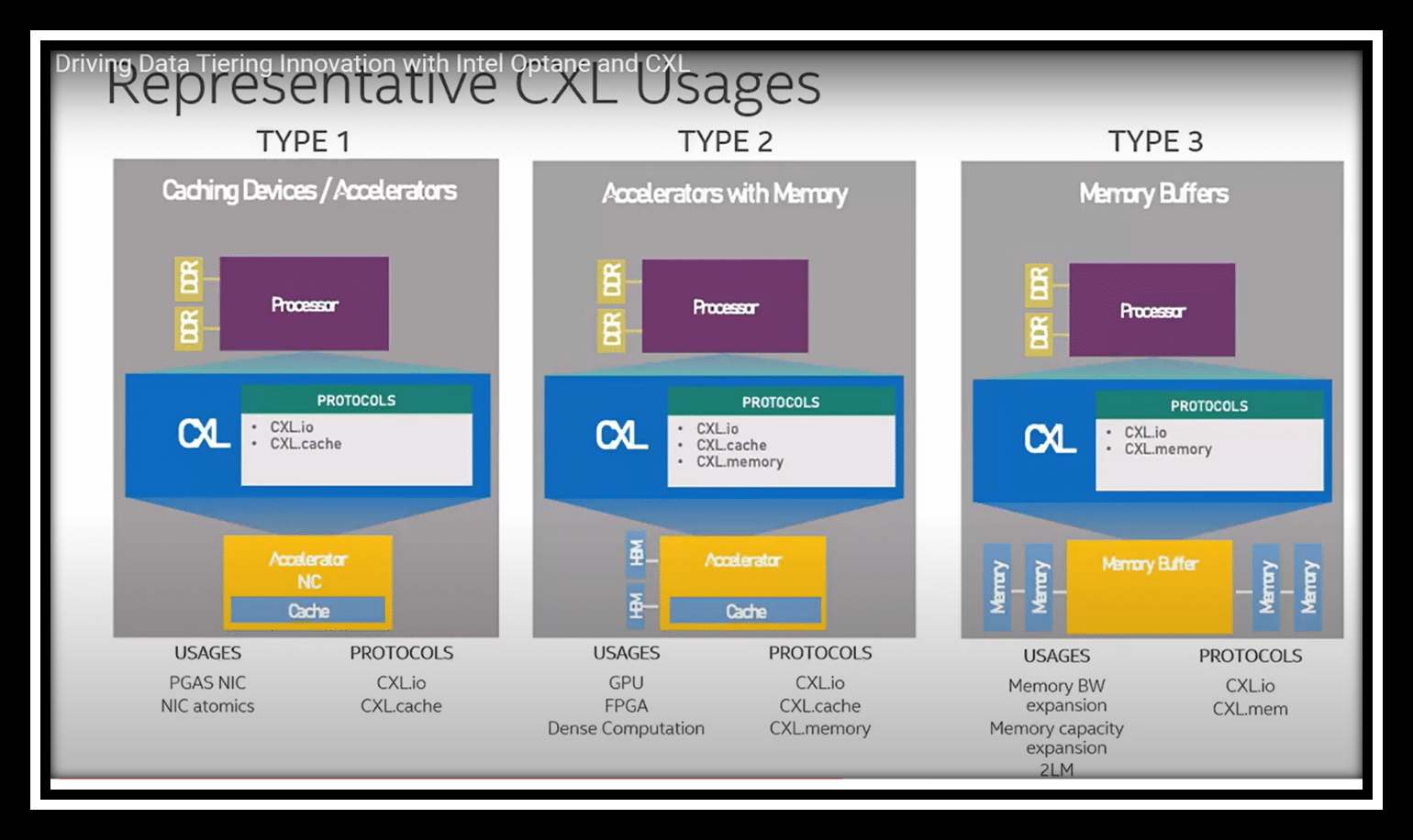

CXL stands for Compute Express Link. It is a way to attach accelerators to hosts, and it is backed by an industry consortium of about 150 companies. It’s a processor-to-accelerator link that runs on, and coexists with, PCIe Gen 5 infrastructure. It enables efficient resource sharing, improves data handling, and reduces bandwidth bottlenecks.

This shows some of the ways CXL can be used:

Because Intel took a multi-year approach, the PMem programming model doesn’t change, and apps written to the SNIA NVM Programming Model standard will still work. And because the consortium is so large, several use cases already exist, and these should transition easily.
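For readers coming from the storage side, the SNIA NVM Programming Model exposes persistent memory to applications as memory-mapped files: the app maps a file, writes to it with ordinary memory stores, and then flushes to make those stores durable. Below is a minimal Python sketch of that pattern, assuming the file lives on a DAX-mounted PMem filesystem (any regular file works for trying it out, just without persistent-memory performance). The function names and record layout are hypothetical, and production code would more typically use PMDK’s libpmem than `msync`-style flushing.

```python
import mmap
import os


def write_record(path: str, offset: int, data: bytes, size: int = 4096) -> None:
    """Store bytes at `offset` in a memory-mapped file, then flush them."""
    if offset < 0 or offset + len(data) > size:
        raise ValueError("record does not fit in the mapped region")
    # Create the backing file if needed and make sure it is at least `size` bytes.
    fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o644)
    try:
        if os.fstat(fd).st_size < size:
            os.ftruncate(fd, size)
        with mmap.mmap(fd, size) as region:
            region[offset:offset + len(data)] = data  # ordinary memory stores
            region.flush()  # msync(): ask the OS to make the stores durable
    finally:
        os.close(fd)


def read_record(path: str, offset: int, length: int) -> bytes:
    """Load `length` bytes at `offset` through a read-only mapping of the whole file."""
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as region:
            return bytes(region[offset:offset + length])
```

The point of the “programming model doesn’t change” claim is that application code shaped like this should not care whether the mapped memory sits on an Optane DIMM or behind a CXL link; on real hardware you would just point `path` at a file under the PMem mount.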

How hyperscalers are using it

I don’t think I can express to you how shocked we were that Intel brought their customer Meta along to talk about AI workloads.

WE WERE SO SHOCKED.

It took us a while to reorient our operations-centric brains, especially given that we don’t know a ton about how Meta works at this level.

DLRMs (deep learning recommendation models) power the recommendation systems for platforms like Meta’s. This type of deep learning model has to work with sparse categorical data that describes higher-level attributes. This blog post describes the model in detail. I’d really like to thank Ehsan Ardestani for being patient with our questions as we oriented ourselves to his presentation.
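To see why these models are so memory-hungry, it helps to look at how sparse categorical data is handled. In a DLRM-style model, each sparse feature (for example, the set of pages a user interacted with) is a list of IDs that index rows of an embedding table, and the selected rows are pooled into one dense vector. The sketch below shows only that lookup-and-pool step and then concatenates the results with the dense features; it deliberately skips the MLPs and the feature-interaction layer of a real DLRM, and the feature names and table values are hypothetical.

```python
from typing import Dict, List

Vector = List[float]


def embedding_bag(table: List[Vector], ids: List[int]) -> Vector:
    """Sum-pool the rows of one embedding table selected by sparse ids."""
    dim = len(table[0])
    pooled = [0.0] * dim
    for i in ids:
        for d, value in enumerate(table[i]):
            pooled[d] += value
    return pooled


def combine_features(dense: Vector,
                     sparse: Dict[str, List[int]],
                     tables: Dict[str, List[Vector]]) -> Vector:
    """Concatenate dense features with one pooled vector per sparse feature."""
    features = list(dense)
    for name in sorted(sparse):  # fixed order so the output layout is stable
        features.extend(embedding_bag(tables[name], sparse[name]))
    return features
```

Each lookup is cheap, but every distinct category value needs its own row, so the embedding tables can grow very large. That is where the pressure on memory capacity comes from.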

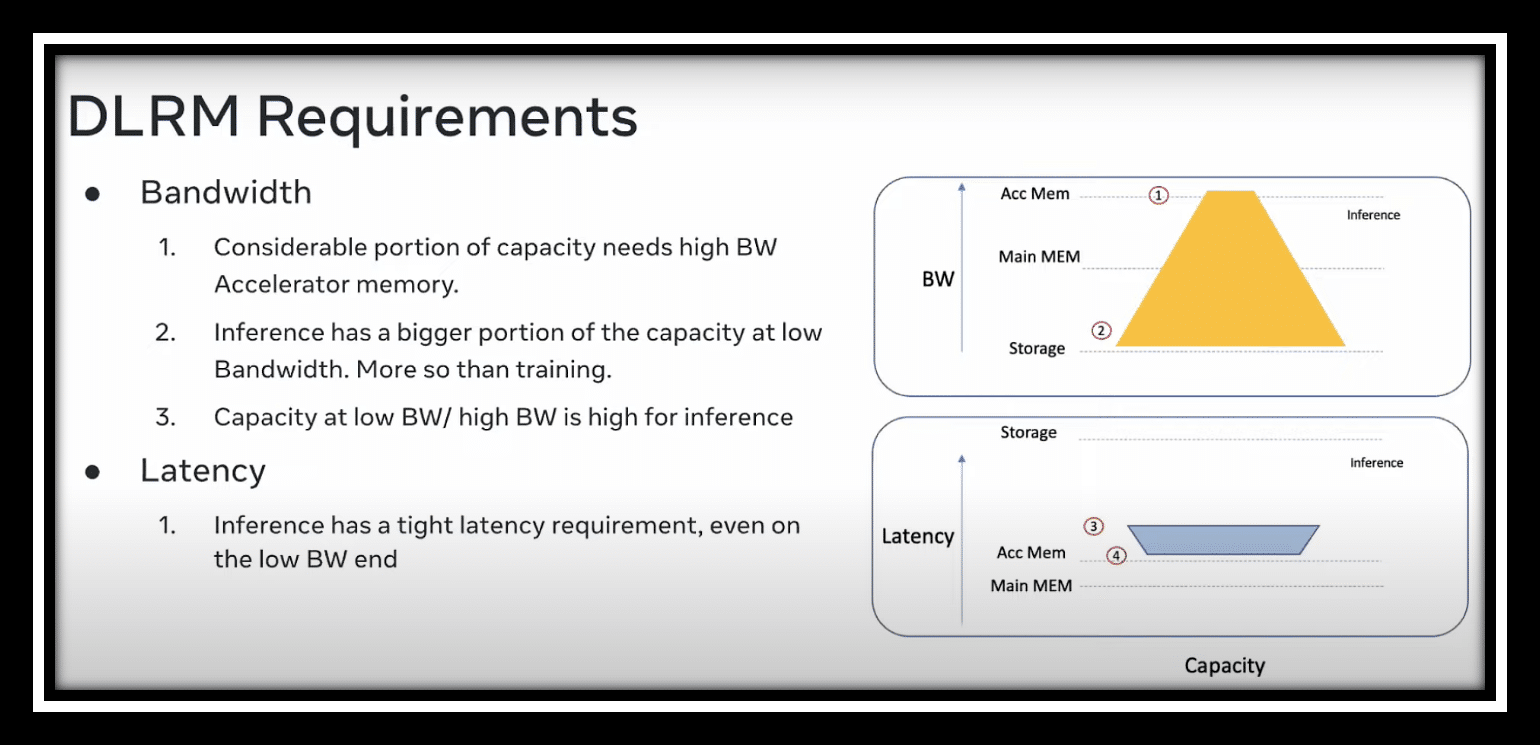

This is where things got interesting. Here are the operational requirements of Meta’s DLRM:

There were other requirements too, such as saving power with simpler hardware and saving power by avoiding scale-out. One thing I found interesting was that every query requires the entire model (so once the model is spread across machines, a query has to go through multiple hosts). In other words, the scale-out they want to avoid has nothing to do with whatever hot topics might drive traffic; it has everything to do with the number of machines required just to serve the DLRM. And this is exactly why they take advantage of CXL: more memory per host means fewer hosts are needed to hold the whole model.
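Because every query needs the whole model, a rough way to think about scale-out is “how many hosts does it take to hold one full copy of the model in memory?” The sketch below does that back-of-the-envelope arithmetic and shows how adding memory per host shrinks the count. All numbers here are hypothetical illustrations, not Meta’s figures, and the calculation ignores compute, bandwidth, and replicas added for traffic.

```python
import math


def hosts_per_replica(model_gb: float, dram_gb_per_host: float,
                      cxl_gb_per_host: float = 0.0, reserved_gb: float = 0.0) -> int:
    """Hosts needed to hold one full copy of the model in memory.

    reserved_gb is memory on each host that is unavailable to the model
    (OS, runtime, buffers); cxl_gb_per_host is extra memory attached over CXL.
    """
    usable_gb = dram_gb_per_host + cxl_gb_per_host - reserved_gb
    if usable_gb <= 0:
        raise ValueError("no memory left for the model on each host")
    return math.ceil(model_gb / usable_gb)
```

With a hypothetical 2000 GB model, 512 GB of DRAM per host, and 32 GB reserved, `hosts_per_replica(2000, 512, reserved_gb=32)` returns 5. Adding a hypothetical 1024 GB of CXL-attached memory per host, `hosts_per_replica(2000, 512, cxl_gb_per_host=1024, reserved_gb=32)` returns 2. Fewer hosts per replica means fewer machines to power and fewer network hops per query.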

Real talk

Traditional ops skills aren’t going away, but they are evolving. AI isn’t a new field, but running it on virtualization and technologies like CXL is new. Still, if you’ve been a storage engineer or a VMware admin, you have the basic skills to do this! VMware announced their support for CXL last year as Project Capitola. I wonder whether this will end up as a product release this year.

Expand your skills to support these new workloads. Your expertise is needed!